Cooling for Orbital Compute: A Landscape Analysis

Key Takeaways

- Thermal management is the defining engineering bottleneck for orbital compute at every scale.

- Space is cold, but it does not cool things, and the physics of heat rejection in vacuum are fundamentally different from anything used in terrestrial data centers.

- At megawatt scale, radiator mass and area are hypothesized to dominate the entire spacecraft, but the constraint may be more tractable than it first appears.

- In the 10 W to 500 W per-node range, the thermal problem is largely solved with flight-proven technology that has decades of heritage in LEO.

- Hardware refresh cycles driven by radiation degradation are routine for a smallsat and difficult for a computing megastructure.

The Bottleneck

The orbital compute race entered a new phase in early 2026. SpaceX filed FCC applications for up to one million data center satellites. Google's Project Suncatcher is preparing to launch TPU-equipped prototypes by early 2027. Starcloud flew an unmodified NVIDIA H100 in orbit and trained an LLM in space for the first time. Axiom Space launched its first orbital data center nodes in January 2026.

Every one of these efforts confronts the same engineering bottleneck: thermal management in the vacuum of space.

Voyager Technologies CEO Dylan Taylor put it plainly in an interview this past month: "It's counterintuitive, but it's hard to actually cool things in space because there's no medium to transmit hot to cold." NVIDIA CEO Jensen Huang made the same observation: "It's cold in space, but there's no airflow, and so the only way to dissipate is through conduction."

The cooling conversation tends to collapse everything into one problem. Everything from a 1 GW orbital data center to a 50-watt edge compute node must reject heat through radiation. But the engineering required, and the maturity of available solutions, could not be more different at opposite ends of that scale.

This is a breakdown of where radiative cooling technology stands in 2026, organized by the scale at which it must operate.

Bring on the Heat

Three principles define thermal management in orbit.

1. Radiation is the only way out.

On Earth, data centers use convection (moving air or liquid past hot surfaces) to carry heat away. Convective systems remove heat at 2,000+ W/m² with minimal mass penalty. In the vacuum of space, there is no fluid medium. The only available mechanism is thermal radiation: emitting energy as infrared electromagnetic waves. Everything else in orbital thermal design is built around this constraint.

2. Radiative output is governed by temperature.

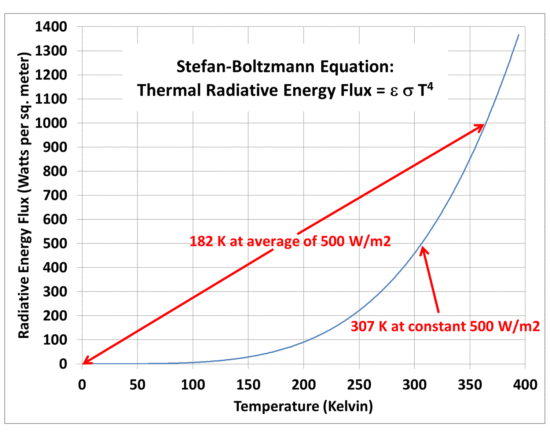

The Stefan-Boltzmann law dictates how much heat a surface can radiate per square meter, and the relationship is steep. A 1 m² surface at 20°C radiates roughly 418 W to deep space (one side) or approximately 770-838 W from both sides.

At 80°C, a typical GPU operating temperature, that rises to approximately 880 W per side for an ideal emitter. At 127°C, the practical upper limit for most electronics, it reaches approximately 1,450 W/m². SemiEngineering's March 2026 analysis offered a useful rule of thumb: rejecting 1 kW of heat takes approximately 2.5 m² of radiator.
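These per-square-meter figures come straight from the Stefan-Boltzmann law, q = εσT⁴. The sketch below is a minimal illustration, assuming an ideal emitter (emissivity 1.0) that sees only deep space, with no absorbed sunlight or Earth IR. The function name is illustrative.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)

def radiated_flux(temp_c, emissivity=1.0):
    """Heat radiated per square meter from one side of a surface, in W/m^2.

    Assumes the surface sees only deep space (no absorbed solar or Earth IR).
    """
    temp_k = temp_c + 273.15
    return emissivity * SIGMA * temp_k ** 4

# Temperatures discussed in the text: room temperature, GPU operating point, electronics limit.
for temp_c in (20, 80, 127):
    print(f"{temp_c:>4} C: {radiated_flux(temp_c):7.0f} W/m^2 per side")
```

For an ideal emitter this gives roughly 419, 882, and 1,454 W/m². Real coatings with emissivity below 1 radiate proportionally less. The 2.5 m² per kW rule of thumb corresponds to 400 W/m², close to the one-sided figure at 20°C.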

3. The sun is the enemy.

A radiator needs a clear view of cold space (2.7 K, the temperature of the cosmic microwave background). But orbital hardware faces approximately 1,361 W/m² of direct solar radiation, plus Earth albedo and infrared (IR) emission. As the Chinese aerospace publication Xianzao Ketang detailed in February 2026: "On the sun-facing side, the heat radiator may not be able to dissipate heat but may instead become a 'heat absorber.'" This is why radiator orientation, sun-synchronous orbit selection, and spectral-selective coatings (surfaces that reflect solar wavelengths while emitting strongly in mid-infrared) matter as much as raw radiator area.
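The "heat absorber" effect can be illustrated with a one-sided energy balance: net rejection is emitted infrared minus absorbed sunlight. This is a simplified sketch, not a thermal model. It ignores Earth albedo and Earth IR, and the emissivity and absorptivity values are hypothetical rather than taken from any specific coating.

```python
import math

SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W/(m^2 K^4)
SOLAR_FLUX = 1361.0      # direct solar flux near Earth, W/m^2

def net_rejection(temp_c, emissivity, solar_absorptivity, sun_angle_deg):
    """Net heat rejected per m^2 of a one-sided radiator, in W/m^2.

    sun_angle_deg is the angle between the surface normal and the sun:
    0 means face-on to the sun, 90 or more means edge-on or shaded.
    A negative result means the panel absorbs more than it emits.
    Earth albedo and Earth IR are ignored (assumption).
    """
    temp_k = temp_c + 273.15
    emitted = emissivity * SIGMA * temp_k ** 4
    cos_incidence = max(0.0, math.cos(math.radians(sun_angle_deg)))
    absorbed = solar_absorptivity * SOLAR_FLUX * cos_incidence
    return emitted - absorbed

# Hypothetical coatings: a dark surface versus a spectrally selective one.
dark_face_on = net_rejection(20, emissivity=0.9, solar_absorptivity=0.9, sun_angle_deg=0)
selective_face_on = net_rejection(20, emissivity=0.9, solar_absorptivity=0.1, sun_angle_deg=0)
selective_edge_on = net_rejection(20, emissivity=0.9, solar_absorptivity=0.1, sun_angle_deg=90)

print(f"dark, facing sun:      {dark_face_on:7.0f} W/m^2")
print(f"selective, facing sun: {selective_face_on:7.0f} W/m^2")
print(f"selective, edge-on:    {selective_edge_on:7.0f} W/m^2")
```

With these illustrative values the dark, sun-facing panel is a net absorber, while the selective coating still rejects heat face-on and rejects more when turned edge-on to the sun.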

Megawatt Scale Cooling

At the power levels envisioned by the largest orbital data center concepts (hundreds of kilowatts to gigawatts), radiators risk becoming the dominant mass and area driver of the entire spacecraft.

The International Space Station provides the most mature benchmark. Its External Active Thermal Control System rejects up to 70 kW using 422 m² of ammonia-loop radiators, achieving roughly 166 W/m² in practice, well below theoretical maximums because of solar exposure, Earth IR loading, and system inefficiencies. Scaling that to a 1 MW facility would require approximately 3,950 m² of radiator at optimistic operating temperatures (about 253 W/m²), with a mass of 19,750 to 39,500 kg at 5-10 kg/m². At the 1 GW scale SpaceX and Google are discussing, the same assumptions multiply both figures by 1,000, to roughly 4 million m² and 20,000 to 40,000 tonnes. One first-principles analysis calculated that at this scale, the thermal management system mass alone exceeds the combined mass of computing equipment, power systems, and structural components.
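The scaling arithmetic can be reproduced in a few lines. This sketch assumes radiator area scales linearly with heat load at a fixed areal rejection rate, and uses the ISS figures and the 5-10 kg/m² areal density quoted above. The function and parameter names are illustrative.

```python
def radiator_budget(heat_load_w, rejection_w_per_m2, areal_density_kg_per_m2):
    """Return (area in m^2, mass in kg) for a radiator sized by linear scaling."""
    area_m2 = heat_load_w / rejection_w_per_m2
    mass_kg = area_m2 * areal_density_kg_per_m2
    return area_m2, mass_kg

# Areal rejection rate achieved by the ISS: 70 kW over 422 m^2.
iss_rate = 70_000 / 422

for label, load_w in (("100 kW", 100e3), ("1 MW", 1e6), ("1 GW", 1e9)):
    area, mass_low = radiator_budget(load_w, iss_rate, 5.0)
    _, mass_high = radiator_budget(load_w, iss_rate, 10.0)
    print(f"{label:>6}: {area:,.0f} m^2, {mass_low / 1000:,.1f}-{mass_high / 1000:,.1f} t")
```

At the rate the ISS achieves, 1 MW needs about 6,000 m² of radiator. A hotter radiator rejecting roughly 250 W/m² would bring that to about 4,000 m². Under linear scaling, every factor of 1,000 in power multiplies area and mass by the same factor.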

Mach33 Research challenged this framing in their "Debunking the Cooling Constraint" analysis, using Starlink V3 as a reference platform. Their finding: when scaling a Starlink-class spacecraft from approximately 20 kW (its current communications payload) to approximately 100 kW (compute-optimized), radiators represent only 10-20% of total mass and roughly 7% of total planform area. Solar arrays, not radiators, dominate the spacecraft footprint. At 100 kW, if a platform can accommodate the solar area required for power generation, the additional radiator area is comparatively modest. Their conclusion: radiative cooling at this scale behaves more like an engineering trade-off than a hard physics blocker.

The distinction matters. At the individual satellite level (100 kW class), cooling is tractable. At constellation-aggregated levels (100 MW to GW), radiator mass compounds across thousands of spacecraft, but not to the point of becoming a binding constraint in the way the area budget is.

Several approaches to radiative cooling are being developed for larger compute satellites:

Passive flat-form-factor designs

Sophia Space's TILE architecture takes a geometry-first approach. Each module is a flat, one-meter-square, few-centimeters-deep compute slab. Processors sit directly against a proprietary passive heat spreader, and the high surface-area-to-volume ratio turns the entire structure into a radiator. A software layer dynamically balances workloads across processors to prevent localized hotspots. The company claims 92% of generated power goes directly to computation. Sophia plans to flight-test on an Apex Space satellite bus by late 2027.

Distributed satellite clusters with optical interconnects

Google's Project Suncatcher envisions constellations of approximately 81 TPU-equipped satellites at approximately 650 km altitude, connected by free-space optical links (1.6 Tbps demonstrated in lab). Google's published research calls for "advanced thermal interface materials and heat transport mechanisms, preferably passive to maximize reliability." Their Trillium TPU v6e was radiation-tested under a 67 MeV proton beam with 10 mm aluminum shielding and survived the radiation levels expected over a five-year LEO mission.

Pumped fluid loops and active thermal control

For individual satellites running tens of kilowatts and above, mechanically pumped fluid loops (MPFLs) circulate coolant through cold plates to collect heat and transport it to external radiators. This is proven, flight-heritage technology. China's Shenzhou spacecraft and Chang'e 3 lander both used MPFL systems. The trade-off is mechanical complexity: pumps can fail, fluid loops can leak (the ISS has experienced ammonia leaks), and every component adds mass.

Liquid Droplet Radiators (LDRs)

This is the most promising advanced concept for high-power thermal rejection. Instead of solid panel radiators, an LDR generates a controlled sheet of microscopic droplets (approximately 100 micron diameter) that radiate heat as they travel through vacuum, then recollects them. NASA research dating to the 1980s showed LDRs can be up to seven times lighter than conventional radiators. A November 2025 study in Applied Thermal Engineering demonstrated heat dissipation rates of up to 450 W/kg. LDRs remain developmental, with ongoing research from Chinese, Russian, and U.S. groups on droplet collection in microgravity, fluid contamination, and solar back-loading.

The EU-funded ASCEND feasibility study, led by Thales Alenia Space, validated the thermal feasibility of orbital data centers in its June 2024 results and added a caveat: lifecycle carbon accounting only works if launcher emissions drop by approximately ten times. Its roadmap targets a 50 kW proof of concept by 2031, scaling to 1 GW by 2050.

Cooling Edge Computing

Below approximately 500 watts per node, the thermal picture shifts fundamentally. The radiator mass problem that dominates megawatt-scale discussions effectively disappears, and the available solutions are proven technologies with decades of operational data.

Body-mounted passive radiation

For payloads dissipating under approximately 100 W, the spacecraft's own structural surfaces serve as the radiator. No deployable panels, fluid loops, or moving parts are required. A 3U CubeSat (approximately 10 cm x 10 cm x 30 cm) has roughly 0.14 m² of external surface area. With appropriate thermal coatings (high emissivity in infrared, low absorptivity in solar wavelengths), that surface can passively reject roughly 60 to 70 W at surface temperatures of 20 to 35°C, before accounting for absorbed sunlight and Earth IR. For edge compute payloads running AI inference or cryptographic operations at 10-50 W, body-mounted radiation is often sufficient. NASA's state-of-the-art SmallSat thermal control survey confirms this as a baseline capability for LEO missions.
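A quick upper-bound check on body-mounted radiation is sketched below. It assumes a plain rectangular box with every face radiating to deep space as an ideal emitter, with no absorbed sunlight or Earth IR, so real spacecraft fall below this bound. The function names are illustrative.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)

def box_surface_area(x_m, y_m, z_m):
    """Total external area of a rectangular box, in m^2."""
    return 2 * (x_m * y_m + y_m * z_m + x_m * z_m)

def passive_rejection(area_m2, temp_c, emissivity=1.0):
    """Upper bound on heat radiated by a surface at uniform temperature, in W."""
    temp_k = temp_c + 273.15
    return emissivity * SIGMA * area_m2 * temp_k ** 4

# Nominal 3U CubeSat envelope: 10 cm x 10 cm x 30 cm.
area = box_surface_area(0.10, 0.10, 0.30)

for temp_c in (20, 35):
    print(f"{area:.2f} m^2 at {temp_c} C: {passive_rejection(area, temp_c):.0f} W")
```

A plain box of those nominal dimensions has about 0.14 m² of surface, which bounds rejection at roughly 59 W at 20°C and roughly 72 W at 35°C. Deployables or other appendages would add area, while absorbed sunlight and Earth IR would subtract from the net figure.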

Heat pipes for moderate loads

When compute loads push into the 50-500 W range, heat pipes and loop heat pipes (LHPs) transfer heat from the processor to the best-positioned radiating surface through phase change of a working fluid, commonly ammonia. SpaceComputer, for example, uses passive cooling with radiators mounted on its processors and cooling pipes that transfer heat to the satellite structure itself, which then radiates to space. The Air Force Research Laboratory developed a scalable thermal management system for CubeSats-to-ESPA-class spacecraft that handles up to 1 kW of waste heat, combining additive-manufactured heat pipes, phase change material accumulators, and roll-out deployable radiators. Georgia Tech's Low-Gravity Science and Technology Lab is pushing further with magnetohydrodynamic (MHD) liquid-metal loops for CubeSats that require no moving parts at all, demonstrating a thermal conductance of 13.8 W/K in lab prototypes.
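A figure in W/K is a thermal conductance, so it relates heat load to the temperature difference across the transport path: ΔT = Q / G. The sketch below is a minimal illustration, assuming steady state and a conductance that stays constant with load, which is a simplification. The loads are illustrative picks from the 50-500 W range.

```python
def temperature_drop_k(heat_load_w, conductance_w_per_k):
    """Steady-state temperature difference across a heat transport path, in K."""
    return heat_load_w / conductance_w_per_k

LOOP_CONDUCTANCE = 13.8  # W/K, the lab prototype figure quoted above

for load_w in (50, 200, 500):
    drop = temperature_drop_k(load_w, LOOP_CONDUCTANCE)
    print(f"{load_w:>3} W -> {drop:.1f} K between processor and radiator")
```

Under these assumptions a 50 W payload sits only a few kelvin above its radiator, while a 500 W load would sit tens of kelvin above it. That gap is why higher loads push designs toward larger or hotter radiators.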

Phase change materials as thermal batteries

PCMs (typically paraffin waxes for space applications) absorb heat during peak compute loads and release it during eclipse or idle periods. A 2025 study demonstrated a hybrid PCM plus AI-tuned controller approach that reduced peak-to-peak thermal variation by approximately 25% in CubeSat applications. This matters for architectures that process workloads in bursts across a constellation rather than running sustained loads on every satellite node, as orbital data centers do.
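To give a sense of scale for the thermal battery idea, the sketch below sizes a PCM to absorb one compute burst entirely as latent heat, using m = P·t / L. The latent heat of 200 kJ/kg is an assumed order-of-magnitude value for paraffin, not a figure from the study mentioned above. The sketch also ignores sensible heating and any heat radiated away during the burst, so it overestimates the mass needed.

```python
def pcm_mass_kg(burst_power_w, burst_duration_s, latent_heat_j_per_kg=200e3):
    """Mass of PCM needed to absorb a compute burst as latent heat alone.

    latent_heat_j_per_kg defaults to an assumed value for paraffin wax.
    """
    energy_j = burst_power_w * burst_duration_s
    return energy_j / latent_heat_j_per_kg

# Hypothetical workload: a 50 W inference burst lasting 10 minutes.
mass = pcm_mass_kg(burst_power_w=50, burst_duration_s=10 * 60)
print(f"{mass * 1000:.0f} g of paraffin")
```

Under these assumptions the burst is buffered by about 150 g of wax, which suggests why PCMs suit bursty edge workloads better than sustained loads: the required mass grows linearly with burst duration.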

The Thermal Management Spectrum

Thermal Management Is an Architectural Divide

TLDR: the thermal challenge in orbital compute scales non-linearly with power.

At the megawatt-to-gigawatt end, radiative cooling remains an active area of engineering development. The question, as Mach33 framed it, is not whether cooling is physically possible (it is) but what the optimal operating point is.

At the edge end, the thermal problem is largely solved: passive radiation, heat pipes, and phase change materials are proven technologies with decades of testing. Adding compute nodes to a distributed constellation scales compute capacity without proportionally scaling thermal infrastructure.

The companies that define orbital compute between now and 2030 will be the ones that match their thermal architecture to their compute architecture and ship working systems at the scale where the physics already works. But the landscape will not stand still.

By the end of the decade, many of the concepts described here will carry flight experience, and a new generation of thermal innovations will have taken their place on the R&D horizon. The thermal constraints that shape orbital compute in 2026 are real but not permanent, and the pace of progress suggests the hardest problems in orbital cooling are closer to solved than they appear.
